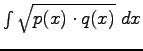

We also tested the Matusita measure in place of the Kullback-Leibler measure, as advised by [1]. The Matusita measure between two probability distributions ![]() and ![]() is given by:

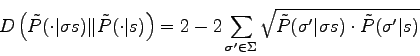

where ![]() describes the whole space again. The term ![]() is called the Bhattacharyya measure. In our case the distance becomes:

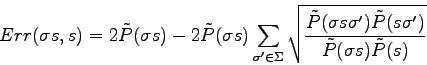

Thus:

Note that computing the Matusita measure also requires summing ![]() terms, so the two distance measures are computationally equivalent. However, the Bhattacharyya term has useful properties: it is symmetric and, in the case of two Gaussian probability densities, invariant to scale. The Matusita measure inherits these properties.
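The properties discussed above can be checked numerically. The following is only a minimal sketch under stated assumptions: it takes the discrete form of the Matusita measure as the sum of squared differences of square roots, without an outer square root (the normalization used in our equations may differ), and it uses the standard closed form of the Bhattacharyya term for two univariate Gaussians. The function names and the example distributions `p` and `q` are hypothetical and chosen for illustration only.

```python
import math


def bhattacharyya_coefficient(p, q):
    """Discrete Bhattacharyya term: sum of sqrt(p_i * q_i) over a shared support."""
    return sum(math.sqrt(pi * qi) for pi, qi in zip(p, q))


def matusita(p, q):
    """Assumed discrete Matusita measure: sum of (sqrt(p_i) - sqrt(q_i))^2.

    When p and q each sum to 1, this equals 2 - 2 * bhattacharyya_coefficient(p, q).
    """
    return sum((math.sqrt(pi) - math.sqrt(qi)) ** 2 for pi, qi in zip(p, q))


def kullback_leibler(p, q):
    """Discrete Kullback-Leibler measure of p relative to q (q_i > 0 assumed)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def gaussian_bhattacharyya(mu1, sigma1, mu2, sigma2):
    """Closed-form Bhattacharyya term between two univariate Gaussians."""
    var_sum = sigma1 ** 2 + sigma2 ** 2
    scale_part = math.sqrt(2.0 * sigma1 * sigma2 / var_sum)
    shift_part = math.exp(-((mu1 - mu2) ** 2) / (4.0 * var_sum))
    return scale_part * shift_part


# Hypothetical example distributions over three bins.
p = [0.7, 0.2, 0.1]
q = [0.4, 0.4, 0.2]

# Symmetry: swapping the arguments leaves the Matusita measure unchanged,
# whereas the Kullback-Leibler measure changes.
print("Matusita(p, q):", matusita(p, q))
print("Matusita(q, p):", matusita(q, p))
print("KL(p, q):", kullback_leibler(p, q))
print("KL(q, p):", kullback_leibler(q, p))

# Scale invariance for Gaussians: multiplying both means and both standard
# deviations by the same factor leaves the Bhattacharyya term unchanged.
print("Gaussian term:", gaussian_bhattacharyya(0.0, 1.0, 2.0, 3.0))
print("Rescaled by 10:", gaussian_bhattacharyya(0.0, 10.0, 20.0, 30.0))
```

Both measures loop once over the same terms, which is consistent with the computational equivalence noted above. Only the Kullback-Leibler value depends on the order of its arguments.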