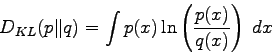

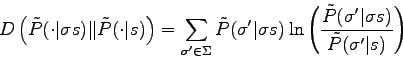

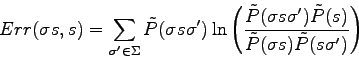

Let $P$ and $Q$ describe two probability measures over the same discrete set of outcomes. The Kullback-Leibler divergence is given by:

$$D_{KL}(P \,\|\, Q) = \sum_{x} P(x) \log \frac{P(x)}{Q(x)}$$

This measure of the distance between probability distributions has been used in [81], together with Laplace's law of succession, to correct corrupted texts using a variable-length Markov model.
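As a rough illustration of how the two ingredients fit together, the sketch below estimates two symbol distributions from counts using Laplace's law of succession and then computes the Kullback-Leibler divergence between them. It is not the procedure of [81]: the function names `laplace_estimate` and `kl_divergence`, the three-letter alphabet, and the sample strings are all assumptions made for the example, and no variable-length Markov model is built here.

```python
import math
from collections import Counter


def laplace_estimate(counts, alphabet):
    """Laplace's law of succession: P(s) = (count(s) + 1) / (total + |alphabet|).

    Every symbol of the alphabet gets a non-zero probability, so the
    divergence below never divides by zero.
    """
    total = sum(counts.get(s, 0) for s in alphabet)
    k = len(alphabet)
    return {s: (counts.get(s, 0) + 1) / (total + k) for s in alphabet}


def kl_divergence(p, q):
    """D(P || Q) = sum over x of P(x) * log(P(x) / Q(x)), natural logarithm.

    Terms with P(x) = 0 contribute nothing, by the usual convention.
    """
    return sum(px * math.log(px / q[x]) for x, px in p.items() if px > 0)


# Hypothetical data: two short symbol sequences over the alphabet {a, b, c}.
alphabet = "abc"
p = laplace_estimate(Counter("aabac"), alphabet)
q = laplace_estimate(Counter("abcbc"), alphabet)

print(kl_divergence(p, q))  # strictly positive, since p and q differ
print(kl_divergence(p, p))  # 0.0, a distribution does not diverge from itself
```

The smoothing matters because the divergence is undefined whenever `q` assigns zero probability to a symbol that `p` can emit. Note also that the divergence is not symmetric: `kl_divergence(p, q)` and `kl_divergence(q, p)` generally differ.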