The Matusita measure between two probability distributions ![]() and

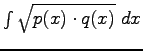

and ![]() is given by:

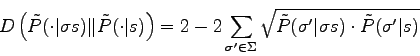

where ![]() describe the whole space again. The term

is called the Bhattacharyya measure [1]. In our case the distance becomes:

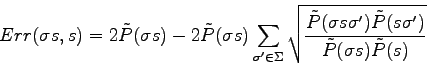

Thus:

Unlike the Kullback-Leibler divergence, the Bhattacharyya term is symmetric in its two arguments and, in the case of two Gaussian probability densities, invariant to scale. The Matusita measure inherits these properties.
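The symmetry and scale invariance can be checked numerically with a minimal sketch for the one-dimensional Gaussian case. It assumes the commonly used form of the Matusita distance, the square root of the integral of (sqrt(p) - sqrt(q))^2, which equals sqrt(2 - 2·BC), where BC is the Bhattacharyya term (the integral of sqrt(p·q)); some authors use the squared version instead. The function names and the parameter values are hypothetical and chosen only for illustration.

```python
import math


def bhattacharyya_gauss(mu1, sigma1, mu2, sigma2):
    """Closed-form Bhattacharyya term between two 1-D Gaussians."""
    var_sum = sigma1 ** 2 + sigma2 ** 2
    prefactor = math.sqrt(2.0 * sigma1 * sigma2 / var_sum)
    return prefactor * math.exp(-((mu1 - mu2) ** 2) / (4.0 * var_sum))


def matusita_gauss(mu1, sigma1, mu2, sigma2):
    """Matusita distance sqrt(2 - 2*BC) (assumed, non-squared form)."""
    bc = bhattacharyya_gauss(mu1, sigma1, mu2, sigma2)
    # Clamp at zero to guard against tiny negative values from rounding.
    return math.sqrt(max(0.0, 2.0 - 2.0 * bc))


def gauss_pdf(x, mu, sigma):
    """Density of a 1-D Gaussian with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2.0 * math.pi))


def bhattacharyya_numeric(mu1, sigma1, mu2, sigma2,
                          lo=-50.0, hi=50.0, n=200_000):
    """Midpoint-rule estimate of the integral of sqrt(p*q), as a cross-check."""
    h = (hi - lo) / n
    total = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * h
        total += math.sqrt(gauss_pdf(x, mu1, sigma1) * gauss_pdf(x, mu2, sigma2))
    return total * h


# Illustrative parameters (not taken from the text).
d_pq = matusita_gauss(0.0, 1.0, 2.0, 3.0)
d_qp = matusita_gauss(2.0, 3.0, 0.0, 1.0)  # arguments swapped

# Rescale the whole axis by s: means and standard deviations both scale by s.
s = 7.5
d_scaled = matusita_gauss(s * 0.0, s * 1.0, s * 2.0, s * 3.0)

print("symmetric:      ", math.isclose(d_pq, d_qp))
print("scale-invariant:", math.isclose(d_pq, d_scaled))
```

Both checks rely only on the closed-form expression; `bhattacharyya_numeric` is included so that the closed form itself can be compared against a direct integration of sqrt(p·q).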